Micro-Project: A HubSpot friend asked me for a script to parse XML sitemaps, so I built him a web app...

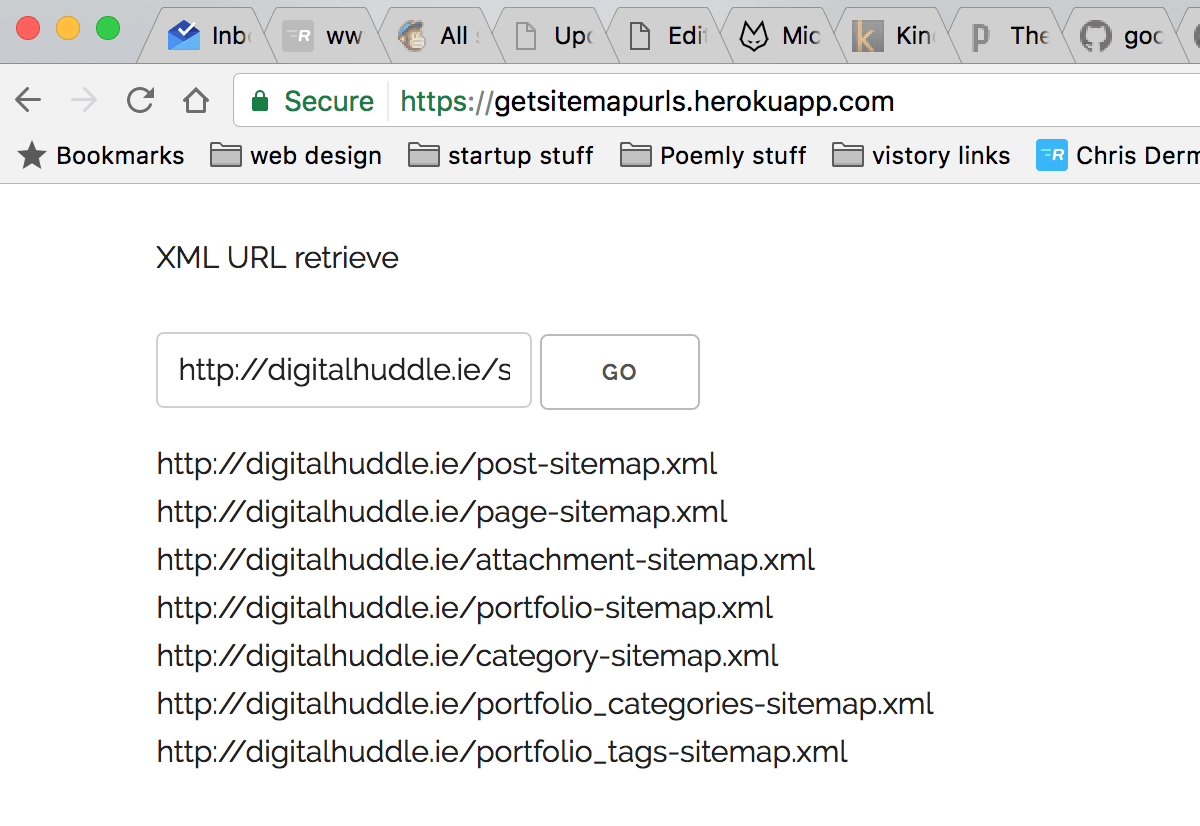

TL;DR: the tool I built in 4 hours to extract all the URLs from a site’s sitemap.xml file.

A good friend of mine, Craig Ellis, is a Sales Engineer at HubSpot. I recently built him a project that helped showcase the power of HubSpot’s API to potential customers, so when he noticed that he and his team were spending quite a bit of time manually pulling URLs out of XML sitemaps, he came to me to see if I could help.

I love automation, and I love distractions even more. Naturally, I said I’d give it a go.

To Python or not to Python

My mind immediately went to Python, and within minutes I’d found a StackOverflow question and answer with a few quick lines of Python that would take in a file and spit out the URLs within it.
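For reference, here’s roughly what that kind of script looks like. This is a minimal sketch, not the StackOverflow answer itself, using Python’s built-in `xml.etree.ElementTree` and assuming a standard sitemap that declares the sitemaps.org namespace. The file name `sitemap_urls.py` and the function `urls_from_sitemap` are made up for illustration.

```python
import sys
import xml.etree.ElementTree as ET

# Namespace from the sitemaps.org protocol. Standard sitemaps declare it on
# <urlset>, so ElementTree needs it to match the <url> and <loc> tags.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def urls_from_sitemap(source):
    """Return every <url><loc> value from a sitemap file path or file object."""
    root = ET.parse(source).getroot()
    return [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]


# Only act as a command-line script when a file path is actually given.
if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python sitemap_urls.py sitemap.xml
    for url in urls_from_sitemap(sys.argv[1]):
        print(url)
```

Run it as `python sitemap_urls.py sitemap.xml` to print one URL per line. A sitemap that doesn’t declare the sitemaps.org namespace would come back as an empty list here, since `findall` matches on the namespaced tag names.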

Ok, cool. Craig is fairly technical; he could probably pop open a terminal and run the script manually. But what if his team isn’t as technical? Also, wouldn’t this be a little more impressive if it were web-based? Yes, the answer to that is yes. 🙂

Do I need Node.js?

My mind shifted to Node. Maybe I could spin up a simple server on Heroku that requested a site’s sitemap URL and processed the response. I’d already used different parsing libraries for Jobb.ie, so this wasn’t totally new to me. With XML having been a staple of the web for decades, I was confident there’d be a suitable parsing option.

However, I didn’t particularly want to build a backend and frontend. This is an unpaid gig, so efficiency is key. Also, wouldn’t handling it all on the frontend be more impressive? Yes, yes it would. 🙂

Can this actually be *entirely* client-side??

I wasn’t sure. I mean, it’s just a request: we send an AJAX request to the appropriate URL, we get back the XML, and we parse out the URLs. It seemed simple, a little *too* simple. I was sceptical. A proof of concept was in order.
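Spelled out, that plan is three steps: request the sitemap, receive the XML, extract the `<loc>` values. In the browser the first step would be an AJAX call; the sketch below shows the same three steps with Python’s standard library, purely to illustrate the flow. `fetch_sitemap_urls` and the User-Agent string are hypothetical, and matching `loc` by its local name (ignoring the namespace) is my choice here, not something the original tool is known to do.

```python
import urllib.request
import xml.etree.ElementTree as ET


def fetch_sitemap_urls(sitemap_url, timeout=10):
    """Request a sitemap, then pull the text of every <loc> element out of it."""
    request = urllib.request.Request(
        sitemap_url,
        # Hypothetical User-Agent; some servers reject requests without one.
        headers={"User-Agent": "sitemap-url-extractor (example)"},
    )
    # Step 1 and 2: send the request and read the raw XML bytes back.
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = response.read()

    # Step 3: parse the XML and keep every <loc>, whatever its namespace.
    root = ET.fromstring(body)
    urls = []
    for element in root.iter():
        local_name = element.tag.rsplit("}", 1)[-1]  # "{ns}loc" -> "loc"
        if local_name == "loc" and element.text:
            urls.append(element.text.strip())
    return urls
```

One caveat: a sitemap index file also uses `<loc>`, but those entries point at other sitemaps rather than pages, so a fuller version would need to follow them.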

jsFiddle to the rescue

jsFiddle is magnificent, and the perfect place to experiment and prove or disprove my thought process. I could even potentially share it with Craig for real-time feedback. I added jQuery as a resource via CDN and got to work.

Blast! CORS thwarted my efforts. When will we learn that internet security just doesn’t work! I joke; always be secure in your online endeavours, kids. But wait, maybe we can get around this with some JSONP… nope! The browser won’t load non-HTTPS content on an HTTPS site. This is for security reasons, and although it’s frustrating now, I’m glad it’s a thing. Good browser.
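Why did both attempts fail? Roughly: script on one origin can only read a cross-origin response if the server opts in with an `Access-Control-Allow-Origin` header, and an HTTPS page is blocked from loading plain-HTTP resources as mixed content. JSONP sidesteps CORS by loading the response as a `<script>`, but that only helps if the server replies with JavaScript wrapped in a callback, which a plain XML sitemap never does. The sketch below models those two rules in Python. It is a simplification (credentialed requests, preflights and redirects are ignored), and the function names and example URLs are hypothetical.

```python
from urllib.parse import urlsplit

DEFAULT_PORTS = {"http": 80, "https": 443}


def origin_of(url):
    """(scheme, host, port): the triple the same-origin policy compares."""
    parts = urlsplit(url)
    return (parts.scheme, parts.hostname, parts.port or DEFAULT_PORTS.get(parts.scheme))


def serialize_origin(url):
    """The origin as it would appear in an Access-Control-Allow-Origin header."""
    scheme, host, port = origin_of(url)
    if port == DEFAULT_PORTS.get(scheme):
        return f"{scheme}://{host}"
    return f"{scheme}://{host}:{port}"


def is_mixed_content(page_url, resource_url):
    """True when an HTTPS page tries to load a plain-HTTP resource."""
    return urlsplit(page_url).scheme == "https" and urlsplit(resource_url).scheme == "http"


def cors_allows_read(page_url, resource_url, response_headers):
    """Simplified: may script on page_url read a credential-less GET response?"""
    if origin_of(page_url) == origin_of(resource_url):
        return True  # same origin: CORS is not involved at all
    # Header names are case-insensitive, so normalise them first.
    headers = {name.lower(): value for name, value in response_headers.items()}
    allowed = headers.get("access-control-allow-origin")
    return allowed == "*" or allowed == serialize_origin(page_url)
```

Both rules are enforced by the browser, which is why a request made from a server isn’t affected by either.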

YO! Build me a server

Yeoman is a scaffolding tool I use to quickly generate the files and folder structure for different types of projects, anything from a Chrome extension to an Express.js server in Node (which is what I needed here). It all runs from the command line, and it’s a pretty nifty piece of kit. I pop it open, run a few commands, and voilà, a server.
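The server’s job is simple: fetch the sitemap on the browser’s behalf (server-side requests aren’t subject to CORS or mixed-content blocking), extract the URLs and hand them back as JSON. The actual app used Express.js and Cheerio; the sketch below swaps in Python’s standard-library `http.server` just to show the same shape. The `/urls?sitemap=` route, the function names and the JSON layout are all hypothetical.

```python
import json
import os
import urllib.request
import xml.etree.ElementTree as ET
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.parse import parse_qs, urlsplit


def extract_locs(xml_bytes):
    """Return the text of every <loc> element, ignoring the XML namespace."""
    root = ET.fromstring(xml_bytes)
    return [
        el.text.strip()
        for el in root.iter()
        if el.tag.rsplit("}", 1)[-1] == "loc" and el.text
    ]


def fetch(url):
    """Download the sitemap server-side, where CORS does not apply."""
    with urllib.request.urlopen(url, timeout=10) as response:
        return response.read()


def handle_query(path, fetcher=fetch):
    """Map '/urls?sitemap=<url>' to (HTTP status, JSON-serialisable payload)."""
    parts = urlsplit(path)
    if parts.path != "/urls":
        return 404, {"error": "not found"}
    target = parse_qs(parts.query).get("sitemap", [""])[0]
    # Only fetch real web URLs; refuse file:// and other schemes.
    if urlsplit(target).scheme not in ("http", "https"):
        return 400, {"error": "expected ?sitemap=http(s)://..."}
    try:
        return 200, {"urls": extract_locs(fetcher(target))}
    except (OSError, ValueError, ET.ParseError) as exc:
        # Network failures, HTTP errors, bad URLs and non-XML bodies end up here.
        return 502, {"error": str(exc)}


class SitemapHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        status, payload = handle_query(self.path)
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        # Only needed if the front end is served from a different origin.
        self.send_header("Access-Control-Allow-Origin", "*")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve():
    # Heroku passes the port in $PORT; fall back to 8000 locally.
    port = int(os.environ.get("PORT", "8000"))
    HTTPServer(("", port), SitemapHandler).serve_forever()
```

Call `serve()` to run it locally, then open `http://localhost:8000/urls?sitemap=https://example.com/sitemap.xml`. Keeping `handle_query` separate from the HTTP plumbing makes it easy to test with a fake fetcher.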

For quick and dirty projects like this, I love using the Skeleton CSS boilerplate from getskeleton.com, a tiny, responsive CSS framework that looks really fresh and simple.

Wrangling with Cheerio.js

There was some… back and forth, let’s say. It’s been a couple of months since I worked in Node, and about 8 months since I worked with Cheerio, so it took a little longer than it should have to wrestle it into shape. But, eventually, I got it working, veeery basically. But hey.

P.S. Check out this article on scraping the web with Node and Cheerio; it’s where I learned it: https://scotch.io/tutorials/scraping-the-web-with-node-js

It’s a good thing I have no life

I hate you, Craig.

2 hours and 50 minutes later, she lives!

At 04:12am I pushed the very, very minimal app to Heroku. It’s a little rough, but it works (at least on the sample sitemap link I was using for development).

I’m gonna make some minor tweaks to the project as time goes on, such as:

- Google Analytics

- Loading spinner from loading.io

- Proper form submit operation

- Copy all results button

- Some HubSpot colour perhaps?

- Feedback form for bugs and improvements

But that can wait for the morning. 🙂

Check out the tool in its current form and let me know how it can be improved: https://getsitemapurls.herokuapp.com/